DeepSeek Launches V4 Models Matching Top AI Rivals at Low Cost

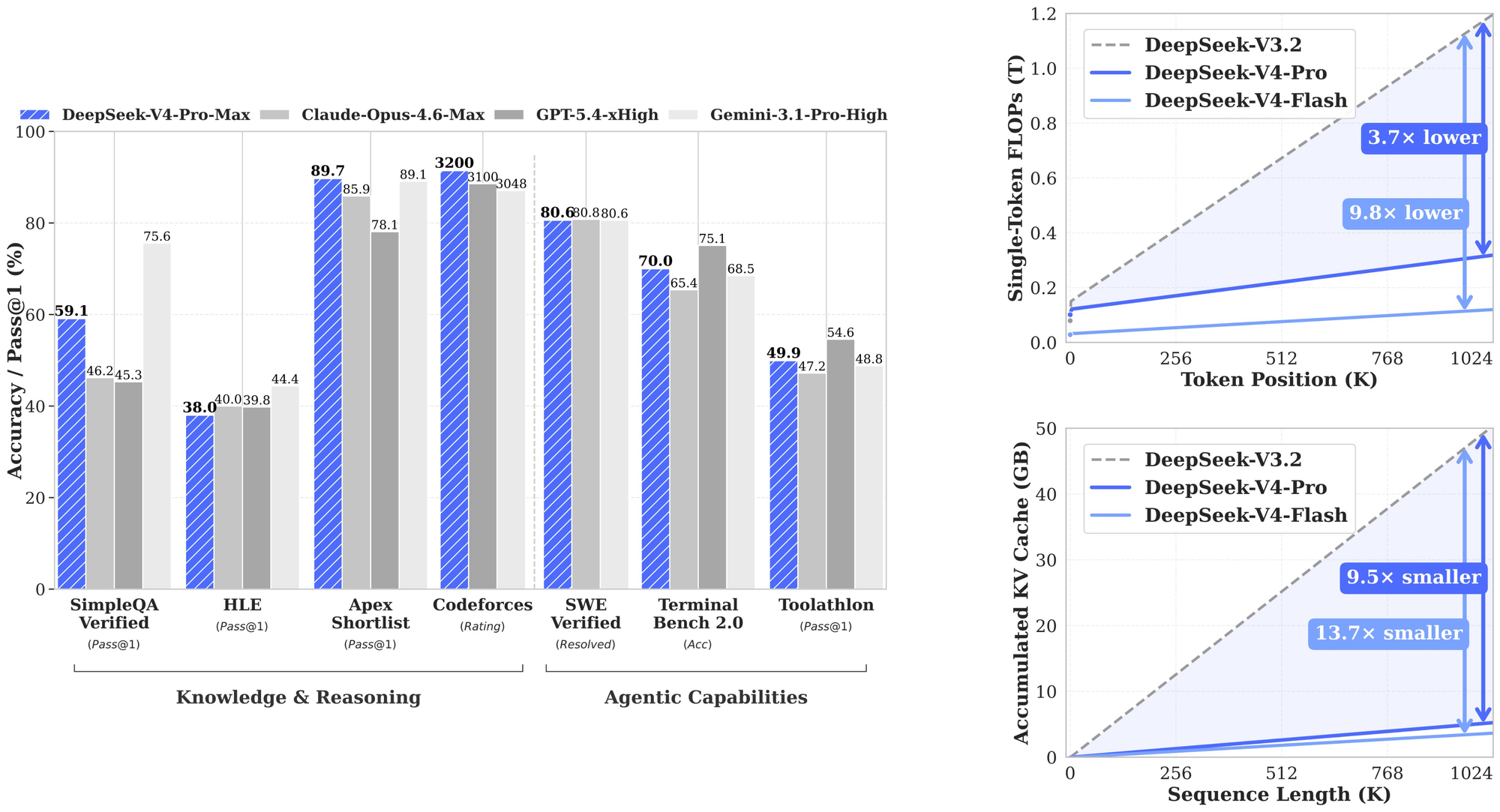

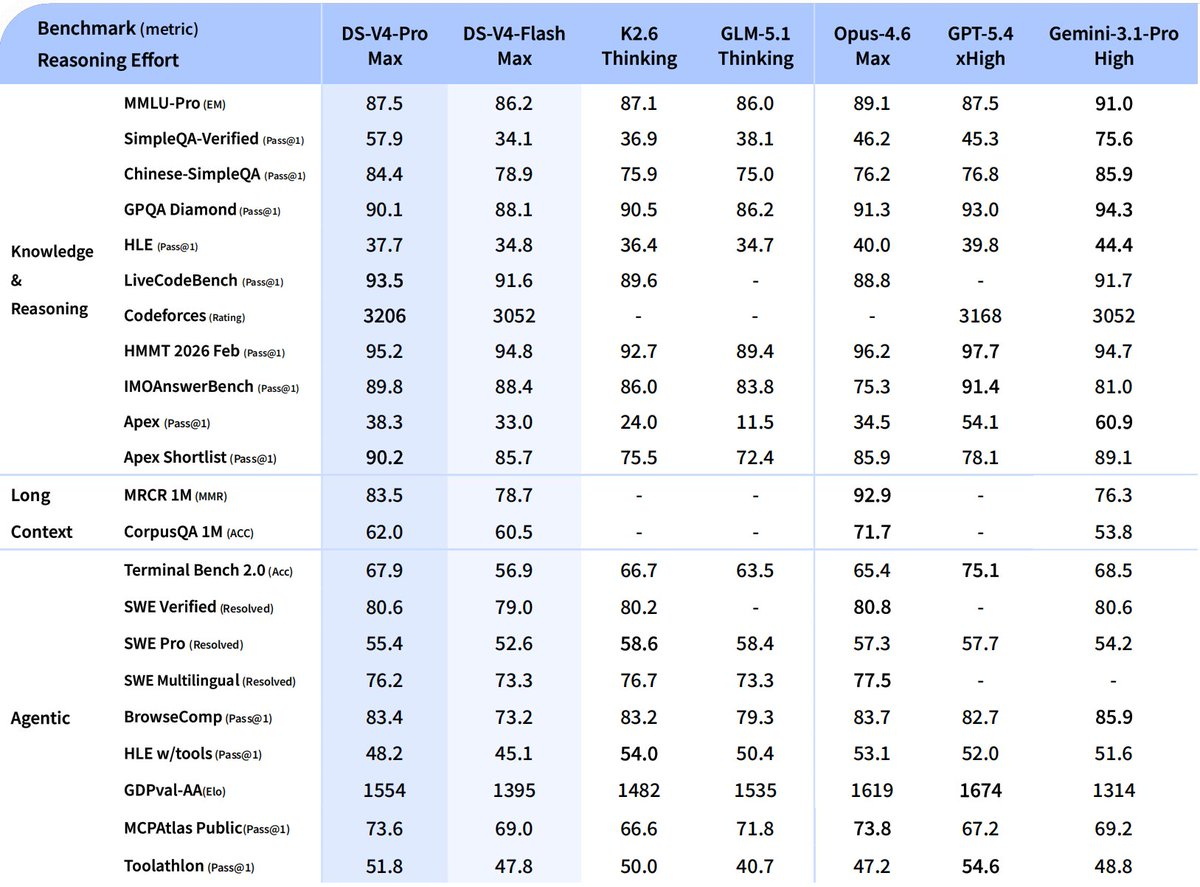

The new DeepSeek-V4-Pro has 1.6 trillion total parameters and leads open-source models on coding, math, and long-context benchmarks. Independent tests confirm it beats models like Gemini 3.1 Pro on web app building.

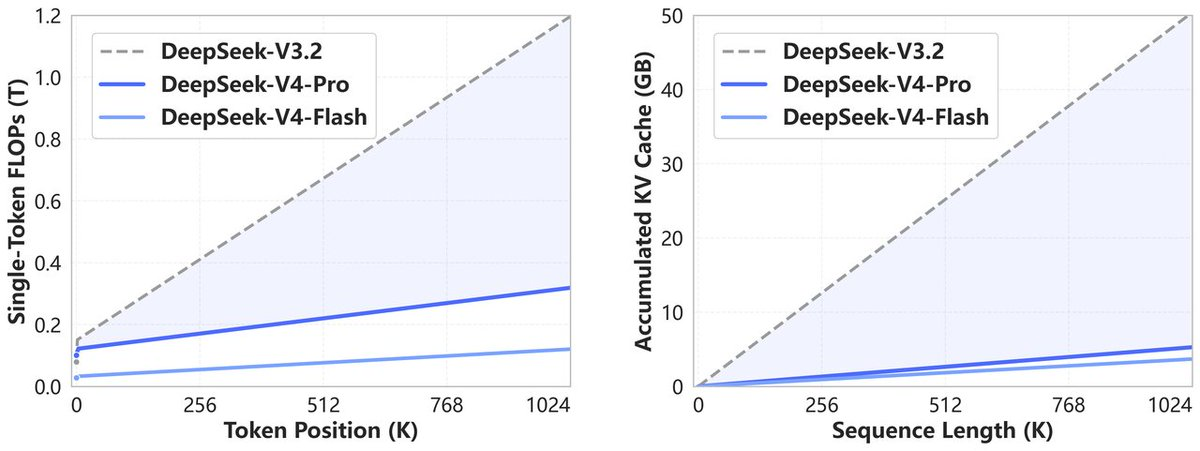

V4-Flash offers similar capability at higher speed and much lower cost. Both models support 1 million token contexts through efficiency techniques that cut memory use by 90%.

Priced 3-8x cheaper than rivals, both models are available now via chat or API, with weights on Hugging Face. They can even run on Huawei chips amid U.S. restrictions. Community leaders like Replit's Amjad Masad call the release a true open breakthrough.

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

— DeepSeek (@deepseek_ai) April 24, 2026

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params.… pic.twitter.com/n1AgwMIymu
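The total and active parameter counts quoted above imply that only a small share of each model's weights is used at a time. A minimal sketch of that arithmetic follows; it uses only the figures from the announcement, and the reading of "active" as "used per token" is an assumption based on how sparse models are usually described.

```python
# Parameter counts as quoted in the announcement, in billions.
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Return active parameters as a fraction of total parameters."""
    return active_b / total_b


for name, p in MODELS.items():
    share = active_fraction(p["total_b"], p["active_b"])
    print(f"{name}: {share:.2%} of parameters active")
```

By this measure V4-Pro activates roughly 3% of its parameters and V4-Flash roughly 4.6%.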

DeepSeek-V4-Pro highlights

- Enhanced Agentic Capabilities: Open-source SOTA in Agentic Coding benchmarks.

- Rich World Knowledge: Leads all current open models, trailing only Gemini-3.1-Pro.

- World-Class Reasoning: Beats all current open models in Math/STEM/Coding, rivaling top closed-source models.

DeepSeek-V4-Flash highlights

- Reasoning capabilities closely approach V4-Pro.

- Performs on par with V4-Pro on simple Agent tasks.

- Smaller parameter size, faster response times, and highly cost-effective API pricing.

Structural Innovation & Ultra-High Context Efficiency

🔹 Novel Attention: Token-wise compression + DSA (DeepSeek Sparse Attention).

🔹 Peak Efficiency: World-leading long context with drastically reduced compute & memory costs.

🔹 1M Standard: 1M context is now the default across all official DeepSeek services.

Dedicated Optimizations for Agent Capabilities

🔹 DeepSeek-V4 is seamlessly integrated with leading AI agents like Claude Code, OpenClaw & OpenCode.

🔹 Already driving our in-house agentic coding at DeepSeek.

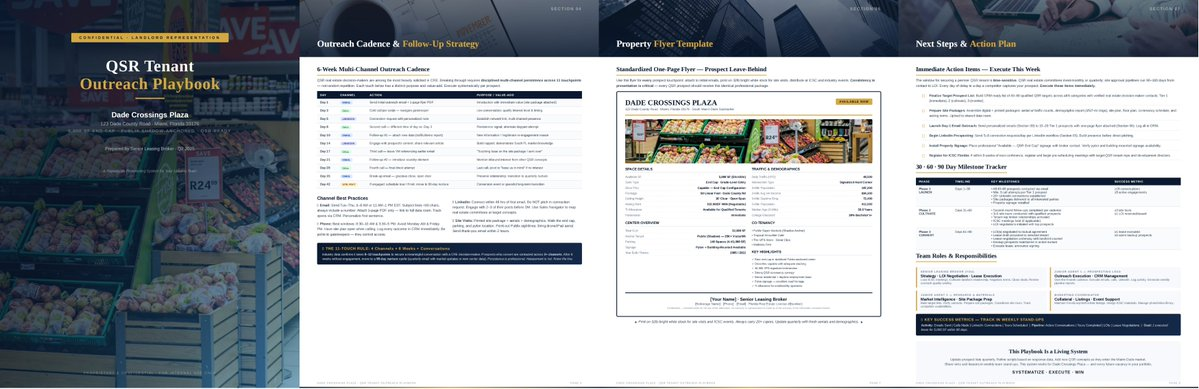

The figure below showcases a sample PDF generated by DeepSeek-V4-Pro.

API is Available Today!

🔹 Keep base_url, just update model to deepseek-v4-pro or deepseek-v4-flash.

🔹 Supports OpenAI ChatCompletions & Anthropic APIs.

🔹 Both models support 1M context & dual modes (Thinking / Non-Thinking): https://api-docs.deepseek.com/guides/thinking_mode
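To make the "keep base_url, just update model" step concrete, here is a minimal sketch that builds an OpenAI-style chat completions request without sending it. The base URL `https://api.deepseek.com`, the `/chat/completions` path, and the Bearer-token header are assumptions based on the usual OpenAI-compatible convention, not details stated in the announcement; `build_chat_request` is a hypothetical helper name.

```python
import json
import urllib.request

# Assumption: an OpenAI-compatible endpoint. The announcement only says to
# keep whatever base_url you already use.
BASE_URL = "https://api.deepseek.com"


def build_chat_request(model, user_text, api_key, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,  # the only field that changes when migrating
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request("deepseek-v4-pro", "Hello", api_key="YOUR_API_KEY")
print(req.full_url)
print(json.loads(req.data)["model"])
```

Switching to the smaller model means passing `"deepseek-v4-flash"` instead. To actually send the request, hand `req` to `urllib.request.urlopen` with a real API key.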

⚠️ Note: deepseek-chat & deepseek-reasoner will be fully retired and inaccessible after Jul 24th, 2026, 15:59 UTC. Until then, they route to deepseek-v4-flash in non-thinking and thinking mode, respectively.
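For codebases that still reference the legacy names, the retirement note can be turned into a small migration helper. This is a sketch under stated assumptions: `migrate_model` and `legacy_names_still_work` are hypothetical helpers, and the mode strings are descriptive labels only, not API parameter values (the thinking mode guide linked above covers how modes are actually selected).

```python
from datetime import datetime, timezone

# Cutoff quoted in the announcement: Jul 24th, 2026, 15:59 UTC.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy name -> (replacement model, mode label).
# The mode labels are descriptive only, not API parameter values.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", "non-thinking"),
    "deepseek-reasoner": ("deepseek-v4-flash", "thinking"),
}


def migrate_model(name):
    """Return (model, mode) for a legacy name, or (name, None) otherwise."""
    return LEGACY_ROUTES.get(name, (name, None))


def legacy_names_still_work(now=None):
    """True while the legacy names are still accessible per the announcement."""
    now = now or datetime.now(timezone.utc)
    return now <= RETIREMENT


print(migrate_model("deepseek-reasoner"))
print(migrate_model("deepseek-v4-pro"))
```

Names that are already current pass through unchanged, so the helper can be applied to every configured model name.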
